Note: Shannon’s entropy equation and his chess work are well documented, but the specific formula (p – p′) log C is my own synthesis, made with the help of AI, that applies his principles to chess advantage. This essay’s original contribution is to reveal the shared mathematical architecture underlying strategic surprise across domains.

Foreword – A short, math-free key to everything that follows

Everything you are about to read boils down to one idea that Claude Shannon discovered while trying to make telephone lines clearer:

The power of any move, tactic, joke, feint, or betrayal is measured by only one thing: how violently it forces someone’s sense of “what happens next” to collapse from many safe possibilities into one brutal reality.

We feel that collapse as shock, laughter, or checkmate.

Chess, war, comedy, romance, markets, espionage: they are all the same game underneath; whoever masters the creation and destruction of uncertainty wins.

The scary equation in the title is just the cleanest way anyone has ever found to say it.

Turn the page, and you’ll see that single idea light up every famous sacrifice, ambush, and punchline in history.

By Narinder — Pao Of Physics

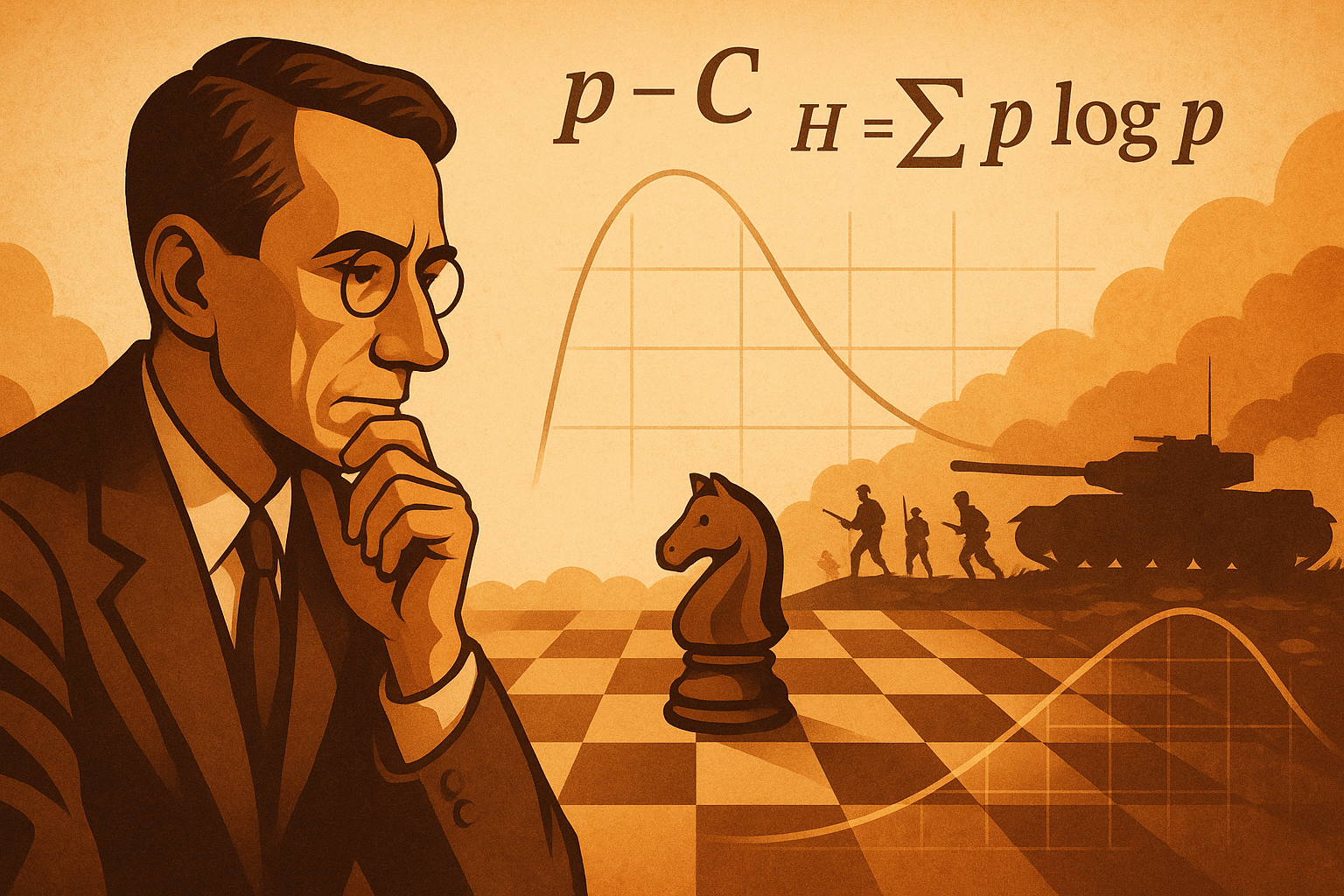

Prologue: The Man Who Measured Surprise

Claude Shannon gave the world the concept of entropy, the mathematics of surprise:

H = -Σ p log p

If a thought is likely, it produces little surprise and low entropy. If a move, message, or event is rare, its surprise is high. Shannon’s genius was to show that intelligence—human or machine—lives on this curve of expectation versus shock.
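The entropy formula can be tried out directly. The sketch below is a minimal illustration, assuming base-2 logarithms (so the result is in bits); the function name and the three example distributions over candidate moves are hypothetical and chosen only to show the contrast.

```python
import math

def shannon_entropy(probs):
    """Return H = -sum(p * log2(p)) in bits for a discrete distribution."""
    if any(p < 0 for p in probs):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probs), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    # Outcomes with p == 0 are skipped: p * log2(p) tends to 0 as p -> 0.
    return sum(-p * math.log2(p) for p in probs if p > 0)

# Hypothetical distributions over candidate moves (illustrative numbers only).
forced_move = [1.0]                 # one certain continuation
four_equal_moves = [0.25] * 4       # four equally plausible continuations
one_favourite = [0.9, 0.05, 0.05]   # one likely move, two long shots

for name, dist in [("forced", forced_move),
                   ("four equal", four_equal_moves),
                   ("one favourite", one_favourite)]:
    print(f"{name}: {shannon_entropy(dist):.3f} bits")
```

The forced move scores zero bits, four equally likely moves score two bits, and the lopsided case lands in between: the more evenly the possibilities are spread, the higher the entropy.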

The same logic can be read into his chess work and, later, into Trevor Dupuy’s battlefield models.

1. Entropy: The Mathematics of Surprise

A certain event carries no information. The rarer an event is, the more information it carries when it does happen. Entropy measures:

– surprise in communication

– surprise in thought

– surprise in decision-making

– surprise in conflict

Entropy is possibility. It powers chess, war, creativity, madness, and strategy.
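The surprise of a single event can be put in numbers too. The sketch below assumes the standard definition of self-information, log2(1/p) bits for an event of probability p, and shows that entropy is simply the average surprise over all outcomes. The function names and probabilities are hypothetical.

```python
import math

def surprisal_bits(p):
    """Self-information log2(1/p) of an event with probability p, in bits."""
    if not 0 < p <= 1:
        raise ValueError("p must be in (0, 1]")
    return math.log2(1.0 / p)

def expected_surprisal(probs):
    """Probability-weighted average surprisal, which equals the entropy H."""
    return sum(p * surprisal_bits(p) for p in probs if p > 0)

print(surprisal_bits(1.0))        # a certain event
print(surprisal_bits(0.5))        # a fair coin flip
print(surprisal_bits(1 / 1024))   # a one-in-1024 event
print(expected_surprisal([0.5, 0.25, 0.25]))
```

A certain event yields zero bits, a coin flip one bit, and a one-in-1024 event ten bits. Surprise grows without limit as the probability shrinks, so no single event carries a fixed "maximum" amount.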

2. Shannon’s Chess Equation: (p – p′) × log C

Applying Shannon’s entropy logic to chess suggests a compact heuristic (my synthesis, not a formula from Shannon’s own papers):

Advantage ≈ (p – p′) log C

p = probability your plan succeeds

p′ = probability the opponent’s plan succeeds

C = branching complexity of the position

log C is the entropy of the options when all C continuations are equally likely, which is the most entropy C options can have.

(p – p′) is the surprise differential.

Every tactic is an entropy spike. Every blunder is entropy collapse.
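To see how the heuristic behaves, here is a minimal sketch. It assumes base-2 logarithms and treats p, p′ and C as numbers someone has already estimated; the function name and every input value are hypothetical, and nothing here comes from a chess engine.

```python
import math

def advantage(p_own, p_opp, branching):
    """Essay's heuristic (p - p') * log2(C); not a formula from Shannon's papers."""
    for p in (p_own, p_opp):
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must lie in [0, 1]")
    if branching < 1:
        raise ValueError("branching complexity C must be at least 1")
    return (p_own - p_opp) * math.log2(branching)

# The same edge in plan quality (0.7 vs 0.4), in two hypothetical positions.
quiet = advantage(0.7, 0.4, branching=4)    # few plausible continuations
sharp = advantage(0.7, 0.4, branching=32)   # many plausible continuations

print(f"quiet position: {quiet:.2f}")
print(f"sharp position: {sharp:.2f}")
print(f"equal plans:    {advantage(0.5, 0.5, branching=32):.2f}")
```

With these inputs the same 0.3 edge is worth 2.5 times as much in the sharp position, because log C rises from 2 to 5. When both plans are equally likely to succeed, the heuristic gives zero however complex the position is.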

Shannon used the pawn as the base unit of power. All evaluations—material, initiative, mobility—reduce to pawn-equivalents.

The pawn is the currency of conflict on the chessboard.
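A pawn-denominated evaluation can be sketched in a few lines. The weights below are the ones commonly cited from Shannon's 1950 paper "Programming a Computer for Playing Chess" (queen 9, rook 5, bishop and knight 3, pawn 1, a half-pawn penalty per doubled, backward or isolated pawn, and a tenth of a pawn per legal move of mobility). The dictionary layout, the function name and the sample position are hypothetical.

```python
# Piece weights in pawn units; the king's large weight makes its loss decisive.
PIECE_VALUES = {"K": 200, "Q": 9, "R": 5, "B": 3, "N": 3, "P": 1}

def shannon_style_eval(own, opp):
    """Score a position in pawn-equivalents from the 'own' side's point of view.

    Each side is a dict of piece counts plus two optional keys (assumed layout):
    'weak_pawns' (doubled + backward + isolated pawns) and 'mobility' (legal moves).
    """
    material = sum(value * (own.get(piece, 0) - opp.get(piece, 0))
                   for piece, value in PIECE_VALUES.items())
    weak_pawns = own.get("weak_pawns", 0) - opp.get("weak_pawns", 0)
    mobility = own.get("mobility", 0) - opp.get("mobility", 0)
    return material - 0.5 * weak_pawns + 0.1 * mobility

# Hypothetical position: we are a bishop up, the opponent has one weak pawn,
# and we have five more legal moves available.
ours = {"K": 1, "Q": 1, "R": 2, "B": 2, "N": 2, "P": 8, "mobility": 30}
theirs = {"K": 1, "Q": 1, "R": 2, "B": 1, "N": 2, "P": 8,
          "weak_pawns": 1, "mobility": 25}

print(shannon_style_eval(ours, theirs))
```

Material, pawn structure and mobility all end up on one scale, which is what lets a program trade one kind of advantage against another.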

4. Dupuy Does the Same for War: Lethality as Currency

Trevor Dupuy expressed all combat power using lethality-equivalents.

A nuclear blast = X rifles

A tank regiment = Y rifle-equivalents

A morale advantage = Z% combat power multiplier

His combat model:

P = N V Q

N = numbers

V = weapons

Q = human quality (cohesion, morale, leadership, initiative)

Q is human entropy. Shannon’s surprise made flesh.

Dupuy’s Residual Power Theorem:

P_r = P_0 e^{-kL}

P_r = residual combat power

P_0 = initial combat power

L = cumulative losses

k = a decay constant

Loss reduces options. Reduced options collapse entropy. Armies break suddenly, exponentially.
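The two formulas above can be chained together numerically. This is a sketch under stated assumptions: it uses the essay's simplified P = N V Q, reads L as percent losses and k as a decay rate per percent, and every number is made up for illustration rather than taken from Dupuy's data.

```python
import math

def combat_power(n, v, q):
    """Essay's simplified form P = N * V * Q."""
    return n * v * q

def residual_power(p0, k, losses):
    """Exponential decay P_r = P_0 * exp(-k * L)."""
    if k < 0 or losses < 0:
        raise ValueError("k and losses must be non-negative")
    return p0 * math.exp(-k * losses)

# Hypothetical force: 1000 troops, weapons factor 1.2, quality factor 1.5.
p0 = combat_power(n=1000, v=1.2, q=1.5)
k = 0.05  # assumed decay constant per percent of losses

for losses in (0, 10, 20, 30):
    remaining = residual_power(p0, k, losses)
    print(f"{losses:>2}% losses -> {remaining:7.1f} ({remaining / p0:.0%} of initial power)")
```

Under these assumptions each additional 10% of losses removes the same fraction of whatever power remains, so combat power falls much faster than headcount does.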

5. Entropy as the Secret Grammar of Warfare

Surprise attacks are entropy spikes.

Intelligence failures are entropy blind spots.

Urban operations raise entropy.

Mountain warfare collapses entropy.

Blitzkrieg increases entropy faster than defenders can respond.

All conflict is a battle for surprise under constraints.

6. The Law Beneath It All: Compound Interest

Small advantages accumulate.

Small errors accumulate in reverse.

Compound interest explains:

– positional advantages in chess

– rising operational momentum

– morale spirals

– collapse curves

– creative cascades

– cognitive spirals

– societal changes

– tectonic pressure

– probability drift

Small × repetition = transformation.
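The arithmetic behind this is ordinary compounding. The sketch below assumes a fixed fractional gain or loss per step; the 1% figure and the 40 steps are hypothetical stand-ins for, say, a small edge on each move of a game.

```python
def compound(start, rate, steps):
    """Value after `steps` repetitions of a fractional change `rate` per step."""
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return start * (1.0 + rate) ** steps

# A 1% edge versus a 1% error, each repeated 40 times from the same start.
gain = compound(1.0, 0.01, 40)
loss = compound(1.0, -0.01, 40)

print(f"+1% x 40: {gain:.3f}")
print(f"-1% x 40: {loss:.3f}")
print(f"gap ratio: {gain / loss:.2f}")
```

A 1% edge repeated 40 times grows to roughly 1.49 times the starting value, while a 1% error repeated 40 times shrinks it to roughly 0.67, so two sides that began level end up more than a factor of two apart.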

Coda: From Shannon’s Board to Dupuy’s Battlefield

Entropy measures surprise.

Chess measures differential surprise.

Dupuy measures human response to surprise.

War reveals entropy collapse.

Shannon wrote the equation.

Dupuy weaponised it.

We are still learning to read it.

Summary

Simple, math-free summary of the core

Shannon’s famous equation H = –Σ p log p looks like scary math, but it is really just a precise way to measure one thing: how unpredictable a situation is.

- When a position (in chess, on a battlefield, or in life) has only one good choice, uncertainty is zero. Everyone knows what’s coming; no surprise is possible.

- When there are dozens of moves or plans that all look reasonable, uncertainty is sky-high. The player (or commander) is drowning in possibilities and can easily pick the wrong one.

The magic happens when someone deliberately pushes their opponent into that high-uncertainty fog while keeping their own plan crystal clear. A brilliant chess sacrifice, a perfectly timed ambush, or a market move that no one saw coming all do the same thing: they force the other side’s mental model of the world to collapse from “I have many safe options” to “I’m suddenly lost” in a single moment.

That sudden collapse is what we feel as surprise. The bigger the collapse, the more shock, awe, or admiration we experience.

In short: Chess, poker, warfare, comedy, romance, and every other contest of minds are not fundamentally about pieces, guns, or words. They are entropy wars — whoever can create more confusion for the opponent while staying calm and focused inside their own low-entropy bubble usually wins.

Shannon didn’t set out to explain strategy or drama in 1948. He was working out how to send messages reliably over noisy telephone lines. Yet he accidentally discovered the hidden DNA that runs through every human conflict where surprise is the ultimate weapon.

That single equation quietly explains why a quiet novelty on move 15 can make a grandmaster sweat, why the Trojan Horse worked, and why the most dangerous generals and comedians have always understood the same secret: Control the uncertainty, control the game.